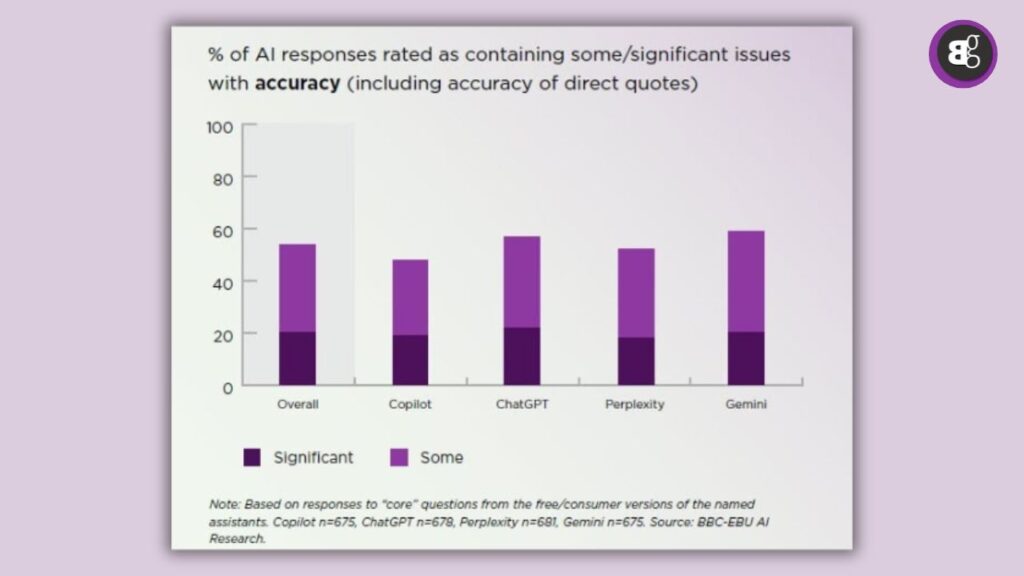

It’s easy to trust a slick chatbot response: it seems calm, confident and quick. But a major new study from the European Broadcasting Union (EBU) and the BBC shows that 45% of the answers that tools like ChatGPT, Microsoft Copilot, Google Gemini and Perplexity gave to news-related queries contained at least one significant mistake.

In plain language: nearly one in every two answers you get on important news topics might mislead you. In this post I’ll walk you through what the study found, why it happens, what risks it creates and how you can stay safe in a world of AI answers.

No 1. What the numbers actually tell us

The study analysed over 3,000 AI-generated responses across 14 languages and 18 countries, evaluating them for accuracy, sourcing and whether fact and opinion were correctly distinguished.

Here are the key stats:

- 45% of responses had at least one major issue.
- 81% had some form of problem (even if minor).
- 31–33% had sourcing errors (missing, misleading or wrong attribution).
- 20% included outright inaccurate or outdated information.
- Some tools performed worse: Gemini, for example, had sourcing issues in about 72% of its responses.

These aren’t just little mistakes. They include wrong facts about legislation, outdated information about the status of high-profile figures, and a failure to separate opinion from fact.

No 2. Why this is happening

Understanding the “why” matters: it keeps us from blaming the tools unfairly while still recognising their limits.

A) Large “open-web” training data

These AI assistants are trained on massive datasets pulled from the internet. That means flawed, outdated or biased content gets included, and the model doesn’t always know to reject it. The report refers to this as a “poisoned corpus” of data.

B) Sourcing & attribution problems

If the model gives you a statement but can’t reliably say where it came from, you have no way to check it. The study found that attribution problems are common.

C) Overconfidence and hallucinatory behavior

These models present their outputs confidently even when they are wrong, so users tend to assume the answers are correct, and that makes the risk high. The study noted that answers may be well structured and appealing while their accuracy varies.

D) Growing use for tasks beyond simple queries

As people rely on these assistants for news, analysis, legal or financial information and business decisions, the margin for error shrinks. The study warns that public trust may erode.

No 3. What this means for you (and for trust in news & AI)

- Trust but verify: You can use these assistants, but treat their outputs as starting points, not final answers.
- Democracy and the information ecosystem are at risk: If many users rely on AI for news and many answers are flawed, public trust in media and institutions may suffer. The EBU warns about exactly this.
- Professional use cases need caution: In law, HR, finance and business strategy, wrong information can have serious consequences.
- Opportunity for “trusted corpora” solutions: There’s rising demand for AI tools built on verified, domain-specific data rather than the broad open web.
- Your role becomes more important: The ability to judge, verify and contextualise information becomes a key skill. AI doesn’t replace critical thinking.

No 4. How to stay safe & smart when using AI for news or insights

- Always check: For any important fact you get from an AI tool, go to a reputable source (a news site or an official document) and cross-verify it.
- Ask “when” and “what changed”: AI tools can give outdated information, so always check the date and whether things have changed since.
- Use smarter queries: Better-phrased questions may get better results, but even then, don’t assume perfect accuracy.
- Know the limitations: If the legal, strategic or financial stakes are high, don’t rely solely on a general-purpose AI assistant.
- Consider specialised tools: AI built for a specific industry on curated data, for example, may offer higher reliability.
- Develop a “source-check” habit: Strong sources matter more than smooth prose. The tool might give a confident answer, but you still need to ask “why?” and “how do I know?”

No 5. The Outlook: Will things get better?

They need to. The study comes with recommendations for AI developers: clearer sourcing, timestamping, clearly distinguishing fact from opinion, and transparency about data usage. But this may not happen overnight. As AI platforms race to grow and monetise, data quality may lag. Meanwhile, users and enterprises need to take responsibility.

For the content and marketing world (yours truly included), this means: don’t assume an AI tool’s suggestions are flawless. Your judgement, your verification processes and your ethical standards still matter a lot.

Conclusion

In short: these tools are powerful and increasingly indispensable, but assuming they are accurate is risky. The 45% figure from the BBC/EBU study is a wake-up call.

Whether you’re a writer, marketer, analyst or everyday user, treat AI-generated news answers as helpful prompts, not gospel. The trust we place in tools is still human-shaped, and in a world of information overload, our critical thinking remains irreplaceable.